Benchmarking the Mainstream Open Source Distributed Graph Databases

The deep learning and knowledge graph technologies have been developing rapidly in recent years. Compared with the "black box" of deep learning, knowledge graphs are highly interpretable, thus are widely adopted in such scenarios as search recommendations, intelligent customer support, and financial risk management.

Meituan has been digging deep in the connections buried in the huge amount of business data over the past few years and has gradually developed the knowledge graphs in nearly ten areas, including cuisine graphs, tourism graphs, and commodity graphs. The ultimate goal is to enhance the smart local life.

Compared with the traditional RDBMS, graph databases can store and query knowledge graphs more efficiently. It gains obvious performance advantage in multi-hop queries to select graph databases as the storage engine. Currently, there are dozens of graph database solutions out there on the market.

It is imperative for the Meituan team to select a graph database solution that can meet the business requirements and to use the solution as the basis of Meituan's graph storage and graph learning platform. The team has outlined the basic requirements as below per our business status quo:

- It should be an open-source project which is also business-friendly

By having control over the source code, the Meituan team can ensure data security and service availability.

- It should support clustering and should be able to scale horizontally in terms of both storage and computation capabilities

The knowledge graph data size in Meituan can reach hundreds of billions of vertices and edges in total and the throughput can reach tens of thousands of QPS. With that being said, the single-node deployment cannot meet Meituan's storage requirements.

- It should work under OLTP scenarios with the capability of multi-hop queries at the millisecond level.

To ensure the best search experience for Meituan users, the team has strictly restricted the timeout value within all chains of paths. Therefore, it is unacceptable to respond to a query at the second level.

- It should be able to import data in batch

The knowledge graph data is usually stored in data warehouses like Hive. The graph database should be equipped with the capability to quickly import data from such warehouses to the graph storage to ensure service effectiveness.

The Meituan team has tried the top 30 graph databases on DB-Engines and found that most well-known graph databases only support single-node deployment with their open-source edition, for example, Neo4j, ArangoDB, Virtuoso, TigerGraph, RedisGraph. This means that the storage service cannot scale horizontally and the requirement to store large-scale knowledge graph data cannot be met.

After thorough research and comparison, the team has selected the following graph databases for the final round: Nebula Graph (developed by a startup team who originally came from Alibaba), Dgraph (developed by a startup team who originally came from Google), and HugeGraph (developed by Baidu).

A Summary of The Testing Process

Hardware Configuration

- Database instances: Docker containers running on different machines

- Single instance resources: 32 Cores, 64 GB Memory, 1 TB SSD (Intel(R) Xeon(R) Gold 5218 CPU @ 2.30 GHz)

- Number of instances: Three

Deployment Plan

Nebula Graph v1.0.1

The Meta Service is responsible to manage cluster metadata. The Query Service is responsible for query execution. And the Storage Service is responsible for storing shared data. RocksDB acts as the storage backend. See the details below:

| Instance No.1 | Instance No.2 |

Instance No.3 |

Metad |

Metad |

Metad |

| Graphd | Graphd | Graphd |

Storaged[RocksDB] |

Storaged[RocksDB] |

Storaged[RocksDB] |

Dgraph v20.07.0

Zero is responsible for cluster metadata management. Alpha is responsible for query execution and data storage. The storage backend is developed by Dgraph. See the details below:

| Instance No.1 | Instance No.2 | Instance No.3 |

| Zero | Zero | Zero |

| Alpha | Alpha | Alpha |

HugeGraph v0.10.4

HugeServer is responsible for cluster metadata management and query execution. Although HugeGraph supports RocksDB as a storage backend, it doesn't support RocksDB as the storage backend for a cluster. Therefore, the team chooses HBase as the storage backend instead. See the details below:

| Instance No.1 | Instance No.2 | Instance No.3 |

| HugeServer[HBase] | HugeServer[HBase] | HugeServer[HBase] |

| JournalNode | JournalNode | JournalNode |

| DataNode | DataNode | DataNode |

| NodeManager | NodeManager | NodeManager |

| RegionServer | RegionServer | RegionServer |

| ZooKeeper | ZooKeeper | ZooKeeper |

| NameNode | NameNode[Backup] | |

| ResourceManager | ResourceManager[Backup] | |

| HBase Master | HBase Master[Backup] |

The Dataset Used for the Benchmarking Test

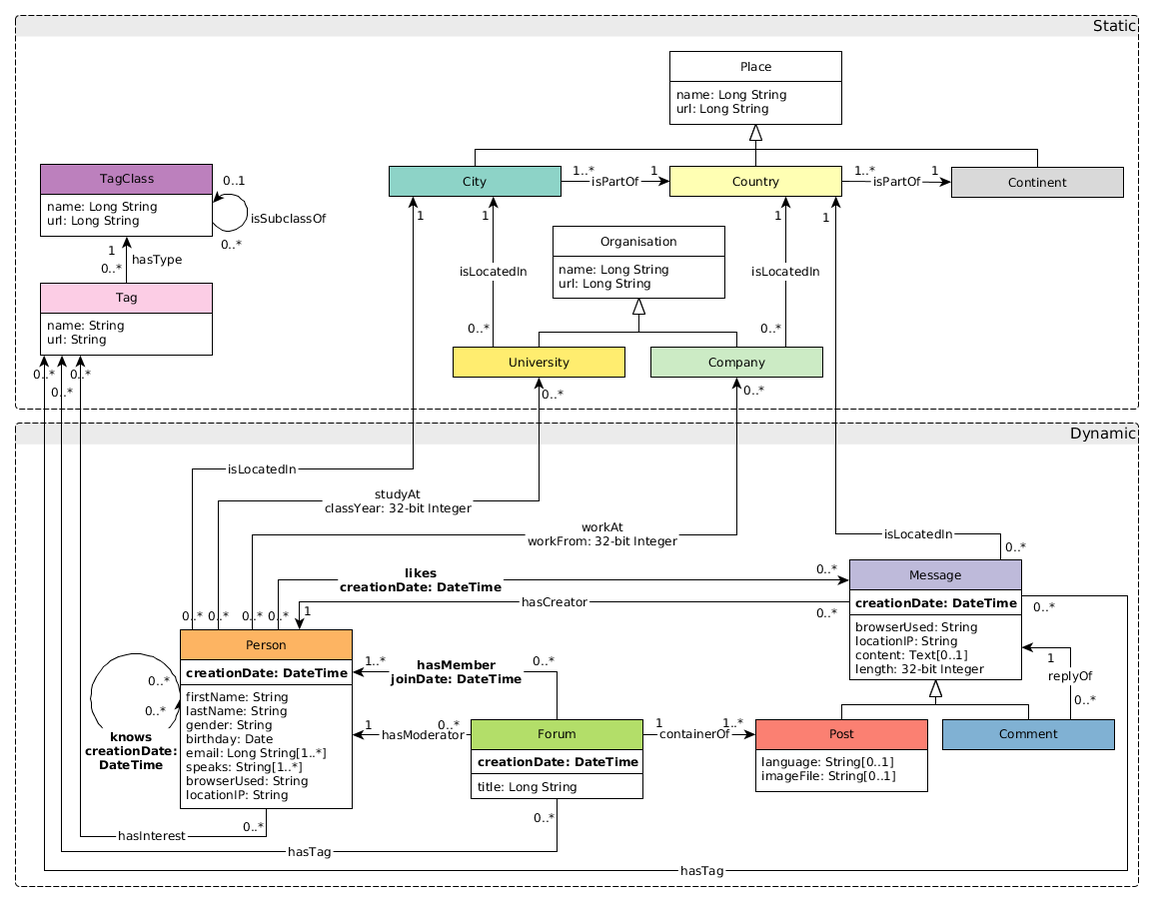

The team uses the LDBC dataset for the benchmarking test.

Below is a brief introduction to the data within the dataset:

- Data generation parameters: branch=stable, version=0.3.3, scale=1000

- Entities: 2.6 billion entities of four types

- Relationships: 17.7 billion relationships of 19 types

- Data format: CSV

- The data size after compaction: 194 GB

Benchmarking Test Results

The benchmarking test has been conducted from three perspectives, i.e. data import in batch, real-time data write, and data query.

Data Import in Batch

The steps of data import in the batch are as follows:

- Generate CSV files in Hive

- Middle files supported by graph databases

- Import data to graph databases

The data import process in each graph database is described below:

- To Nebula Graph. Execute the Spark task in Hive to generate SST files in RocksDB, and then ingest the SST files to Nebula Graph.

- To Dgraph. Execute the Spark task in Hive to generate RDF files, and then execute the bulk load operation to generate the persistent files for each node of the cluster.

- To HugeGraph. Execute the loader to directly insert data to HugeGraph in CSV files from Hive.

Data Import Testing Results

| Database | Import Method | Time Consumed Each Step | Occupation Before Import (gziped) | Occupation After Import | Space Amplification Ratio | Load Blance Among Nodes |

| Nebula Graph | 1. Hive -> SST files 2. SST files -> DB |

1. 3 h 2. 0.4 h |

194 G | 518 G | 2.67x | 176 G / 171 G / 171 G Balanced among Nodes |

| Dgraph | 1. Hive -> RDF files 2. RDF files -> DB |

1. 4 h 2. 8.7 h OOM |

4.2 G |

24 G Imported user relationships only for Space Amplification Test. |

5.71x | 1 G / 1 G / 22 G Not balanced among Nodes |

| HugeGraph | 1. Hive -> CSV 2. CSV -> DB |

1. 0 h 2. 9.1 h Out of Disk Space |

4.2 G Cannot be imported in full size. |

41 G Imported user relationships only for Space Amplification Test. |

9.76x | 11 G / 5 G / 25 G Not balanced among Nodes |

Seen from the above results, the team found that Nebula Graph performs the best because it has the lowest time consumption as well as the smallest storage amplification ratio. The data is distributed by primary key hash and the storage is balanced among the nodes.

In Dgraph, the original data size is 194 GB and the server memory is 392 GB; the bulk load operation was suspended after 8.7 hours due to OOM, which resulted in partial success. The data is distributed by the predicates in the RDF model and one type of relationship can only be stored on the same node, which resulted in a severe unbalance of storage and computation among nodes.

In HugeGraph, the original data size is 194GB. When the data import operation was executed, a node of 1000GB was full and the import was partially successful. The storage amplification ratio in HugeGraph is the largest and data distribution is severely unbalanced among nodes.

Real-Time Data Write

This test was to insert vertices and edges to graph databases in real-time and to test the write performance of each database. Below is a brief description of how the metrics are defined.

- Response time. Send 50,000 write requests at a fixed QPS and record the time consumed for sending all the requests successfully. Obtain the time consumed from when a request is sent to when a response is received on the client side in terms of avg, p99, and p999.

- The largest throughput. Send 1,000,000 write requests at a gradually increasing QPS and keep querying the data. The peak QPS (successful requests) within one minute is the largest throughput.

How to Insert Nodes to Graph Databases

Nebula Graph

INSERT VERTEX t_rich_node (creation_date, first_name, last_name, gender, birthday, location_ip, browser_used) VALUES ${mid}:('2012-07-18T01:16:17.119+0000', 'Rodrigo', 'Silva', 'female', '1984-10-11', '84.194.222.86', 'Firefox')

Dgraph

{

set {

<${mid}> <creation_date> "2012-07-18T01:16:17.119+0000" .

<${mid}> <first_name> "Rodrigo" .

<${mid}> <last_name> "Silva" .

<${mid}> <gender> "female" .

<${mid}> <birthday> "1984-10-11" .

<${mid}> <location_ip> "84.194.222.86" .

<${mid}> <browser_used> "Firefox" .

}

}

HugeGraph

g.addVertex(T.label, "t_rich_node", T.id, ${mid}, "creation_date", "2012-07-18T01:16:17.119+0000", "first_name", "Rodrigo", "last_name", "Silva", "gender", "female", "birthday", "1984-10-11", "location_ip", "84.194.222.86", "browser_used", "Firefox")

How to Insert Edges to Graph Databases

Nebula Graph

INSERT EDGE t_edge () VALUES ${mid1}->${mid2}:();

Dgraph

{

set {

<${mid1}> <link> <${mid2}> .

}

}

HugeGraph

g.V(${mid1}).as('src').V(${mid2}).addE('t_edge').from('src')

Real-Time Write Testing Results

Response Time

| Real-Time Write Response Time (ms) |

Insert Vertices at QPS=100 avg / p99 / p999 |

Insert Edges at QPS=100 avg / p99 / p999 |

| Nebula Graph | 3 / 10 / 38 | 3 / 10 / 34 |

| Dgraph | 5 / 13 / 54 | 5 / 9 / 43 |

| HugeGraph | 37 / 159 / 1590 | 28 / 93 / 1896 |

The Largest Throughput

| Real-Time Write the Largest Throughput |

Insert Vertices QPS |

Insert Edges QPS |

| Nebula Graph | 84000 | 76000 |

| Dgraph | 10138 | 9600 |

| HugeGraph | 410 | 457 |

Real-Time Write Testing Results Analysis

Seen from the above results, the response time and throughput of Nebula Graph are leading the pack in the test because the write requests can be distributed to multiple storage nodes thanks to its architecture.

In Dgraph, the response time and throughput are not good compared with Nebula Graph because one type of relationship can be stored on the same node per its structure.

HugeGraph performs the worst in terms of response time and throughput because the storage backend is HBase which has lower concurrent read and write capability than RocksDB (adopted by Nebula Graph) and BadgerDB (adopted by Dgraph).

Data Query

The data query benchmarking was to test the read performance of the graph database candidates and it was based on the following common queries: n-hop queries with ID returned, n-hop queries with properties returned, and shared friends query. Below is a brief description of how the metrics are defined.

- Response time. Send 50,000 read requests at a fixed QPS and record the time consumed for sending all the requests. Obtain the time consumed from when a request is sent to when a response is received on the client side in terms of avg, p99, and p999. If no response is received within 60s after a read request is sent, then a timeout error is returned.

- The largest throughput. Send 1,000,000 read requests at a gradually increasing QPS and keep querying the data. The peak QPS (successful requests) within one minute is the largest throughput.

- Cache configuration. All graph databases in this test support read from cache and the feature is on by default. The team had cleaned server cache every time before a test was conducted.

Sample Code of N-Hop Queries with ID Returned

Nebula Graph

GO ${n} STEPS FROM ${mid} OVER person_knows_person

Dgraph

{

q(func:uid(${mid})) {

uid

person_knows_person { #${n}Hops = Embedded layers

uid

}

}

}

HugeGraph

g.V(${mid}).out().id() #${n}Hops = out()Length of the link

Sample Code of N-Hop Queries With Properties Returned

Nebula Graph

GO ${n} STEPS FROM ${mid} OVER person_knows_person YIELDperson_knows_person.creation_date, $$.person.first_name, $$.person.last_name, $$.person.gender, $$.person.birthday, $$.person.location_ip, $$.person.browser_used

Dgraph

{

q(func:uid(${mid})) {

uid first_name last_name gender birthday location_ip browser_used

person_knows_person { #${n}Hops = Embedded layers

uid first_name last_name gender birthday location_ip browser_used

}

}

}

HugeGraph

g.V(${mid}).out() #${n}Hops = out()Length of the link

Sample Code of Shared Friends Queries

Nebula Graph

GO FROM ${mid1} OVER person_knows_person INTERSECT GO FROM ${mid2} OVER person_knows_person

Dgraph

{

var(func: uid(${mid1})) {

person_knows_person {

M1 as uid

}

}

var(func: uid(${mid2})) {

person_knows_person {

M2 as uid

}

}

in_common(func: uid(M1)) @filter(uid(M2)){

uid

}

}

HugeGraph

g.V(${mid1}).out().id().aggregate('x').V(${mid2}).out().id().where(within('x')).dedup()

Testing Results of N-Hop Queries with ID Returned

| N-Hop Queries with ID Returned Response Time (ms) |

N = 1, QPS = 100 avg / p99 / p999 |

N = 2, QPS = 100 avg / p99 / p999 |

N = 3, QPS = 10 avg / p99 / p999 |

| Nebula Graph | 4 / 13 / 45 | 24 / 160 / 268 | 1908 / 11304 / 11304 |

| Dgraph | 4 / 12 / 30 | 93 / 524 / 781 | Timeout |

| HugeGraph | 28 / 274 / 1710 | Timeout | Timeout |

N-Hop Queries with ID Returned The Largest Throughput (QPS) |

N = 1 Avg Neighbors Returned = 62 |

N = 2 Avg Neighbors Returned = 3844 |

N = 3 Avg Neighbors Returned = 238328 |

| Nebula Graph | 80830 | 6950 | 32 |

| Dgraph | 8558 | 100 | Timeout |

| HugeGraph | 804 | Timeout |

Timeout |

Testing Results of N-Hop Queries with Properties Returned

The average size of the properties on a single vertex is 200 Bytes.

N-Hop Queries with Properties Returned Response Time (ms) |

N = 1, QPS = 100 avg / p99 / p999 |

N = 2, QPS = 100 avg / p99 / p999 |

N = 3, QPS = 10 avg / p99 / p999 |

| Nebula Graph | 5 / 20 / 49 | 99 / 475 / 1645 | 48052 / >60s / >60s |

| Dgraph | 50 / 210 / 274 | Timeout |

Timeout |

| HugeGraph | 29 / 219 / 1555 | Timeout |

Timeout |

N-Hop Queries with ID Returned The Largest Throughput (QPS) |

N = 1 Avg Neighbors Returned = 62 |

N = 2 Avg Neighbors Returned = 3844 |

N = 3 Avg Neighbors Returned = 238328 |

| Nebula Graph | 36400 | 730 | 2 |

| Dgraph | 80 | Timeout |

Timeout |

| HugeGraph | 802 | Timeout |

Timeout |

Testing Results of Shared Friends Queries

This test didn't include the largest throughput.

Shared Friends Queries Response Time (ms) |

QPS = 100 avg / p99 / p999 |

| Nebula Graph | 4 / 20 / 42 |

| Dgraph | 4 / 13 / 36 |

| HugeGraph | 39 / 366 / 1630 |

Data Query Testing Results Analysis

In the test of response time (latency) of one-hop queries with ID returned, both Nebula Graph and Dgraph need to search the outgoing edges once. Due to the storage architecture in Dgraph, edges of the same type are stored in the same node. Therefore, there is no network consumption for one-hop queries in Dgraph. In Nebula Graph, edges are distributed to multiple nodes, which is why Dgraph performed a little bit better than Nebula Graph in this test.

In the test of throughput (QPS) of one-hop queries with ID returned, the CPU load of the cluster was restricted by the single node where the edges were stored, which resulted in low CPU occupation ratio in the cluster. Therefore, the largest throughput of Dgraph is only 11% of that of Nebula Graph.

In the test of response time (latency) of two-hop queries with ID returned, due to the same reason stated above, the actual load in Dgraph nearly reached the upper limit of the cluster when QPS was set to 100. Therefore, the latency in Dgraph was lengthened significantly to 3.9x of that of Nebula Graph.

In the test of one-hop queries with properties returned, Nebula Graph stored all properties on vertices as a data structure in a single node. Therefore, the number of searches was equal to the number of outgoing edges Y. In Dgraph, properties on vertices were considered as outgoing edges and were distributed to different nodes. Therefore, the number of searches was equal to the product of X, i.e. the number of properties, and Y, i.e. the number of outgoing edges. For this reason, the performance of Dgraph is worse than Nebula Graph. The same rule applies to multi-hop queries.

The test of shared friends queries was nearly the same as the test of two one-hop queries with ID returned. Therefore, the results of the two tests were quite similar.

HugeGraph's storage backend is HBase, which has lower concurrent read and write capability than RocksDB (adopted by Nebula Graph) and BadgerDB (adopted by Dgraph). Therefore, HugeGraph performed worse than the other two in terms of response time and throughput.

Conclusion

The Meituan team has finally selected Nebula Graph as our graph storage engine because it has outperformed the other two candidates in terms of batch data import speed, real-time write performance, and n-hop query performance.

References

- Nebula Graph Benchmark:https://discuss.nebula-graph.io/t/nebula-graph-1-0-benchmark-report/581

- Graph Database Performance Comparison by the Tencent Cloud team:https://discuss.nebula-graph.io/t/performance-comparison-neo4j-vs-nebula-graph-vs-janusgraph/619

- DGraph Benchmark:https://dgraph.io/blog/tags/benchmark/

- TigerGraph Benchmark:https://www.tigergraph.com/benchmark/

- RedisGraph Benchmark:https://redislabs.com/blog/new-redisgraph-1-0-achieves-600x-faster-performance-graph-databases/